After a pause I am working again on my semi-automated podcast transcription project. The first part involves evaluating the quality of various methods of transcription. But how?

In this post I’ll explore how I’ve been comparing transcripts to evaluate transcription services. I’ll include the results for some human-powered services. I’ll write up the results for automated services in a later post.

Accuracy

The key metric for transcription is accuracy. How closely the words in the generated transcript match the spoken words in the original audio.

To compare the words in the transcript with the audio you need a reference transcript that’s deemed to be completely accurate; a ground truth upon which comparisons can be based. Then the sequence of words in that reference transcript can be compared against the sequence of words in the hypothesis transcript from each system being compared.

Word Error Rate

The Word Error Rate (WER) is a very simple and widely use metric for transcription accuracy. It’s a number, calculated as the number of words that need to be changed or inserted or deleted to convert the hypothesis transcript into the reference transcript, divided by the number of words in the reference transcript. (It’s the Levenshtein distance for words, measuring the minimum number of single word edits to correct the transcript.) A perfect match has a WER of zero, larger values indicate lower accuracy and thus more editing.

Of course there are other metrics, and arguments pointing out that not all words are equally important. For our purposes though, the simplicity of WER is very appealing and widely used in the industry.

One Word Per Line

I decided early on that I’d simply convert a transcript text file into a file containing one word per line, and then use a simple diff command to identify the words that need to be changed/inserted/deleted, and the diffstat command to count them up.

Simple, in theory. In practice a significant amount of ‘normalization’ work, which I’ll describe below, was needed to reduce spurious differences.

Visualizing the Differences

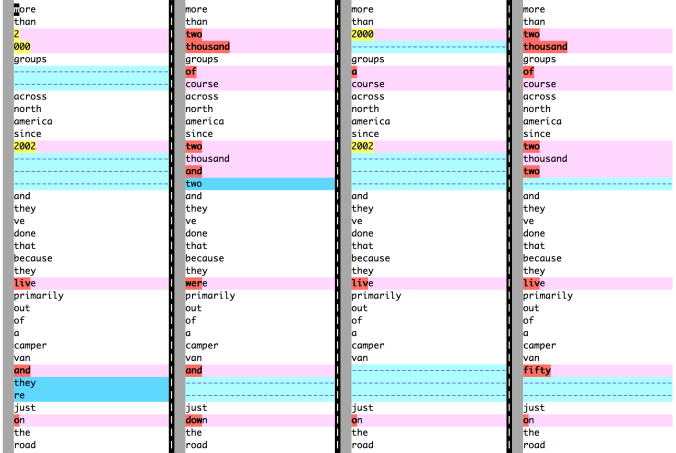

A very useful command for inspecting and comparing these ‘word files’ is vimdiff. It gives a clear colour-coded view of the differences between up to four files.

Here’s an example comparing word files that haven’t been normalized. The left-most column is the transcript produced by a human volunteer. The other three columns, from left to right, were generated by automated systems (in this case VoiceBase API, Dragon by Nuance, and Watson by IBM).

This very small section of the transcript has several interesting differences.

Before I talk about normalization I want to draw your attention to the second column, the automated transcript by VoiceBase. Note the “two thousand and two” vs “two thousand two” in the fourth column, and “just down the road” vs “just on the road” in the other three columns. The phrases “just down the road” and “two thousand and two” would be more common than the alternatives, yet the alternatives are correct in this case.

I suspect this is an example of automated services giving too much weight to their training data when selecting the best hypothesis for a sentence. (It’s a similar situation to autocorrect correcting your miss-spellings with correctly spelt but inappropriate replacements.) A key point here is that, because the chosen hypothesis is likely to read well, it’s harder for a human to notice this kind of error.

Normalization

You can see from the vimdiff output above that numbers can cause differences to be flagged even though the words convey the correct meaning: “2,000” vs “2000” vs “two thousand”, and “2002” vs “two thousand and two” vs “two thousand two”. To address this I wrote some code to ‘normalize’ the words. For numbers it converts them to word form and handles years (“1960” to “nineteen sixty”) and pluralized years (“1960s” to to “nineteen sixties”) as special cases.

Words containing apostrophes are another case of differences: I’m splitting on any non-word character so “they’re” is being split into two words. Some transcription systems might produce “they are” (not shown in this example). Apostrophes are tricky. To address this the normalization code expands common contractions, like “they’re” into “they are”, handles some non-possessive uses of “‘s” and removes the apostrophe in all other cases. It’s a fudge of course but seems to work well enough.

Another significant area for normalization are compound words. Some people, and systems, might write “audio book” while others write “audiobook” or “audio-book”. To address this the normalization code expands closed compound words, like “audiobook” into the separate words “audio book”. It seemed be too much work to generalize this so the normalization code has a hard-coded list that detects around 70 compound words that I encountered while testing. (Remember that the goal here isn’t perfection, it’s simply reducing the number of insignificant differences for my test cases so the WER scores are a more meaningful.)

Other normalizations the code handles include ordinals (“20th” becomes “twentieth”), informal terms (“gotta” becomes “got to”), spellings (“realise” becomes “realize”), and some abbreviations are collapsed (“L.A.” becomes “LA”).

Transcripts Produced by Humans

For my evaluation I chose a podcast episode of just under two hours length, that had good quality audio, and already had a transcript produced by a volunteer. The primary voice was an American male who spoke clearly but quickly and without a strong accent.

I suspected that a single human-produced transcript wouldn’t be sufficiently reliable so I ordered human-produced transcripts from three separate transcription services. This turned out to be more interesting, and more useful, than I’d anticipated.

- 3PlayMedia “Extended (10 Days)”

- Rate $3/min = $333.44 total.

- Completed in 7 days.

- Seven “flags” in the transcript, mostly “inaudible” or “interposing voices”.

- Scribie “Flex 5 (3-5 Days)”

- Rate $0.75/min = $83.35 total.

- Completed in 3 days. Formats: TXT, DOC, ODF, PDF. (No timecodes)

- VoiceBase “Premium 99%, 5-7 Days, 3 human reviews”

- Rate $1.5/min = $168.00 total.

- Completed in 4 hours. Formats: PDF, RTF, SRT (timecodes).

- Note that VoiceBase provide both human and automated transcription services.

The 4:1 difference in cost is notable! 3PlayMedia certainly charge a premium. Let’s see how their transcripts compare.

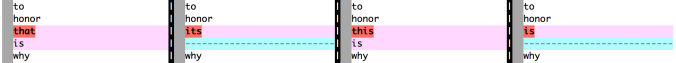

With the normalization I was expecting these transcripts, all produced by humans, to be closer to each other than they turned out to be. Here’s an example of some differences:

(In this vimdiff image, and all the ones that follow, the columns from left-to-right are: Volunteer, 3PlayMedia, Scribie, VoiceBase.)

That shows three different transcriptions of the “to use” phrase. It’s not very clear on the audio and I’d agree with the majority here and say that “you used” was correct. In this example it isn’t significant but it does illustrate that imperfect human judgement is needed when the audio is isn’t completely clear. Transcribers have to write something and can’t easily express their degree of confidence. If it falls too low they might write something like “[inaudible]” or “[crosstalk]”, but above that threshold they take a guess and you don’t know that. And because the guess is likely to read well it’s harder for a human to notice this kind of error.

The second difference shown in that image relates to the difference between a clean transcript and a verbatim transcript. In a clean transcript conformational affirmations (“Uh-huh.”, “I see.”), filler words (“ah”, “um”) and other forms of speech disfluency aren’t included.

That “you are” is in the audio, so would be in a verbatim transcript, but three of the four humans decided that it wasn’t significant and should be left out of the clean transcript. But one of the four decided it should be kept in. Other common examples include “I mean” and “you know”. There’s no right answer here, it’s a judgement call case-by-case.

It works the other way as well. Sometimes the transcriber will add a word or two that they think makes the text more clear. Compare “everything submits to and is accountable to” with “everything submits to it and is accountable to”. Two of the four humans decided to add an “it” that wasn’t in the audio. Similarly “believe it” vs “believe in it”, here again two of the four added an “in”, only this time it was not the same transcribers adding the word. Transcribers are likely to “clean” transcripts in a way that’s biased towards their own speaking style. Speakers have a distinct verbal style and changes like these by a transcriber can be more distracting than helpful if not in keeping with the speakers own style.

Generally these interpretations of the audio, and writing the corresponding text, are made with care and don’t alter the meaning for the reader. At least that’s what I was thinking until I came across this:  To be fair this part of the audio is a little garbled due to crosstalk. I’m sure the speaker said “doesn’t matter” (which got normalized to “does not matter”). It’s another example of where the lack of confidence indicators in human transcripts is a problem.

To be fair this part of the audio is a little garbled due to crosstalk. I’m sure the speaker said “doesn’t matter” (which got normalized to “does not matter”). It’s another example of where the lack of confidence indicators in human transcripts is a problem.

Here are a few other examples of differences in these human transcripts that caught my eye:

Getting to Ground Truth

To compare transcription services I needed a reference transcript – a ground truth – against which to compare the others.

Since the transcripts varied significantly I had little choice but to create my own ‘ground truth’ transcript manually. I copied the transcript generated by the volunteer, then listened to the audio in the places where the various transcripts differed in a non-obvious way – well over 200 of them. For each place I decided what the most accurate transcription was and edited the ground truth transcript to match. The most difficult places were where multiple speakers talk over one another. It’s very hard to convey the intent clearly and accurately in a linear sequence of words. Often ‘clearly’ and ‘accurately’ are at odds with each other.

I repeated this process with the automated transcripts in order to add-in the disfluencies and conformational affirmations etc. present in the audio. In other words, to shift the ground truth transcript from a ‘clean’ transcript to being closer to a ‘verbose’ transcript. Without that work the apparent Word Error Rate of the automated transcripts would be unfairly higher. They would all be equally effected but that effect would reduce the visibility of the genuine differences. (With hindsight I should have used a separate file for this step but the overall process was iterative and exploratory rather than the linear sequence outlined here.)

The final ground truth transcript has 21,629 words.

Other Attributes

Speaker Diarisation

The transcripts produced by the volunteer and by Scribie identified the speakers. The transcript from Voicebase identified transitions from one speaker to another, but didn’t identify the specific speaker. The transcript from 3PlayMedia didn’t identify speakers or transitions, despite costing three to four times as much.

Quality Flags

3PlayMedia flagged seven places in the transcript with [? … ?] where the transcriber was unsure of the words but had made a reasonable guess, plus three instances of [INTERPOSING VOICES] and nine [INAUDIBLE]. Voicebase flagged five [CROSSTALK] and two [INAUDIBLE]. Scribie flagged none.

Most of 3PlayMedia transcription text flagged as unsure were correct. About half of the INTERPOSING VOICES and INAUDIBLE in the 3PlayMedia transcript the other services had accurate transcriptions for.

Segmentation / Punctuation

The volunteer transcript had 842 sentences in 158 paragraphs.

The 3PlayMedia transcript had 1259 sentences in 331 paragraphs.

The Scribie transcript had 915 sentences in 223 paragraphs.

The Voicebase transcript had 1077 sentences in 648 paragraphs.

I’m not sure what to make of those wide differences. The figures are a little noisy due to artifacts in the way the files were processed, but most the differences seem to be due simply to style.

The figures for the automated systems (in a future post) highlight those which do a very poor job of segmentation. The big downside of Dragon by Nuance, for example, is that it doesn’t do segmentation. You simply get a very long stream of words. So you’ll still have a lot of work to do to make them usable, no matter how accurate they might be.

Results for Human Transcription

Service WER Diarisation Timing Cost ========== === =========== ====== ======= 3PlayMedia 4.5 None Subtitles $333.44 VoiceBase 4.6 Transitions Subtitles $168.00 Scribie 5.1 Speaker names Paragraph $ 83.35 Volunteer 5.3 Speaker names None N/A

The important number here is the Word Error Rate (WER). Lower is better. The difference between 4.5 and 5.3 is quite small in practice. Most of the ‘errors’ are in parts of the transcript are due to insignificant differences or ambiguous sections due to cross-talk.

I suspect a WER around 5 represents a reasonable ‘best case’ for transcription of an interview. For comparison, the best of the automated transcription services I’m testing have WER of 12 to 16, with some in the 30 to 40 range.

All this work was to understand how to judge the accuracy of a transcription in order to evaluate automated systems. Comparing human transcription services turned out to be a useful approach to understanding the issues and help develop the tools.

It’s clear that for the highest accuracy it’s very helpful to use more than one service and check the places where they differ. Of course that significantly increases the cost and effort.

I’m testing a number of automated systems currently and I’ll include those results in a later blog post.

Pingback: Semi-automated podcast transcription | Not this...

This is a really interesting piece. We actually did a similar experiment in January to research human preference, rather than accuracy. The eventual goal being to train towards more accurate automated systems.

View at Medium.com

IBM Watson cloud api seems to be much more robust to noise and low bit rate.

I compared IBM Watson vs Google Cloud on reference documents I recorded in a quiet room using a handheld microphone. I found the deciles of the bleu scores over the reference transcripts to be fairly comparable. However, when evaluated on audio having background noise and obtained using lower quality microphones, the word count deciles were much different. IBM is much more likely to provide longer transcript text.

Here’s the code I used in my comparisons :

https://github.com/pluteski/speech-to-text/blob/master/compare_transcripts.py

Interesting. IBM Watson didn’t score well in my testing. That might be due, in part, to them not supporting MP3 audio so I had to transcode it. (I tried mono 16KHz FLAC but the resulting file was larger than their max upload size, so I used the Ogg Opus codec instead.) That transcoding is bound to have reduced the quality.

I wonder if it has to do with your recordings being less noisy — most of mine have significant ambient noise. IBM Watson has a narrowband model that can be used in case of low-bit rate or where broadband just doesn’t generate anything useful, whereas I don’t know of any analogous setting in Google for dealing with low-bit rate noisy content.

BTW — if you haven’t already you might like the interview of Deepgram on Twiml. https://twimlai.com/from-particle-physics-to-audio-ai-with-scott-stephenson/ Apparently they’ve decided to go with phonemes for indexing rather than words.

I recently evaluated simonsays.ai – which uses Watson as their backend service – and the results were consistent with my previous testing: a WER of 25.7 vs 25.2. That’s about twice the error rate of the best automated services I’ve tested (12-16). Perhaps Watson shines better with lower quality audio.

When I tested Deepgram, back in December 2016, they had a very poor WER of 40.1. Perhaps they’ve improved since. I like the approach they’re taking and the fact they’ve Open Sourced their core code. Indexing phonemes is an interesting approach. I’ll aim to reevaluate them before I post the overall results.

Thanks.

Hi Tim, I was trying out simonsays.ai and comparing it with the output of Watson and I’ve found differences in transcriptions for the same input. Where did you find out that simonsays.ai uses Watson for their transcription service? Thanks!

Dave

I can’t recall where I got that from, and can’t find it now, so feel free to ignore. I can say that the word error rates from the two services are similar in my testing (both ~25), and not very good compared to the best systems. I’m still waiting to find time to finish my testing, just a couple of services left. I can say that services like Trint, Speechmatics, Sonix, Scribie, YouTube, VoiceBase, and Tremi have much better word errors rates in the 12 to 18 range.

Did you ever try Google Cloud speech V1,0 GA and Micorsoft Bing Speech API?

Thank you for the extensive review of these services. Have you had a chance to check out Rev, professional human transcription, or Temi, automated transcription? They are similar services to the ones you listed but cheaper.

Thanks for sharing your thorough write-ups –

As a fellow podcast junkie and software developer… I’ve also felt the pain with lack of searchability and sharability around podcasts, and am also working to solve this problem…

I’ve recently released a system for “long audio alignment” at word level. It takes audio and transcript as input, and outputs word-level timecodes.

It’s currently being used to power this “immersive listening” proof of concept:

http://www.listensynced.com/episode/ycombinator-elizabeth-iorns-on-biotech-companies-in-yc

(More examples here: http://www.listensynced.com/ycombinator)

A great use case for this technology is to combine it with one of the cheaper transcription options to additionally obtain granular timecodes.

Alignments are performed in ~3% of audio runtime (i.e.~2 minutes of processing per hour of audio). Meaning, once you have a basic text transcript, interactive transcripts can be generated within just a few minutes as a “post-processing” step!

Anyone is encouraged to be in touch if they’re looking for: 1) embeddable interactive transcripts, or 2) a RESTful API for Long Audio Alignment.

Cheers,

Levi

levi@futurestuff.co

Pingback: Transkript für Logbuch Netzpolitik #232

Hi Tim,

We at Scribie just launched our own automated transcription service. Please do try it out once.

https://scribie.com/transcription/free

Thanks!

Just tried it. I’m happy to report that you’re in the top-tier. More details when I write up the results.

Do you know a software that I could use two compare the original (good file) against the transcript file?

Tim, the other matter is accent/dialect of course. You are welcome to try us out (for free for your testing – just let us know first so we can credit you an agreed set of minutes) as we do manage a very wide range of English accents globally – for a custom solution go to https://waywithwords.net and for our first stage human to the hybrid model (we are still choosing a valid machine partner to work with for this model) try https://nibity.com/

Hi Tim, When do you plan to release your results?

The long gaps have meant that the landscape keeps changing under me! Most recently, and dramatically, with Google’s Speech-to-Text product update on April 9th. I’m working on the post again now. I’m mostly away for the next two weeks though, and any estimate I could give would be a hostage to fortune. I can tell you that Google is (now) ranked top :)

Pingback: A Comparison of Automatic Speech Recognition (ASR) Systems | Not this…