NYTProf development rolls on, with 2.07 being another significant release.

Here’s a summary of highlights from the many improvements.

Windows, at last

Windows wizard Jan Dubois has contributed patches that enable NYTProf to run on Windows. Many thanks, Jan!

Corrected Subroutine Total Time

A significant error in time reported as spent in a subroutine has been fixed. It was showing the sum of the inclusive and exclusive time instead of just the inclusive time.
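To make the difference concrete, here is a minimal sketch in Python. It is a toy model, not NYTProf code: the sub names `outer` and `inner` and their timings are invented for illustration.

```python
# Hypothetical timings in milliseconds; not taken from a real profile.
own_time = {"outer": 2.0, "inner": 3.0}    # time spent in each sub's own code
calls = {"outer": ["inner"], "inner": []}  # which subs each sub calls

def exclusive(sub):
    """Time spent in the sub itself, excluding anything it calls."""
    return own_time[sub]

def inclusive(sub):
    """Time spent in the sub plus everything it calls."""
    return own_time[sub] + sum(inclusive(callee) for callee in calls[sub])

# What should be reported as the total for outer: 2.0 + 3.0
correct_total = inclusive("outer")
# The erroneous figure: inclusive plus exclusive, counting outer's own time twice
buggy_total = inclusive("outer") + exclusive("outer")

print(f"correct: {correct_total} ms, buggy: {buggy_total} ms")
```

In this model the erroneous figure overstates the total by exactly the sub's own (exclusive) time.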

String Evals

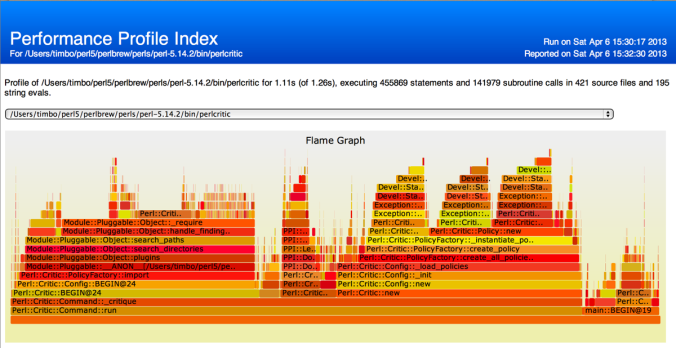

One of NYTProf’s innovative features is tracking of subroutine calls by calling location. In earlier versions you couldn’t see calls made from within string evals. Now you can. Even for evals within evals:

That’s a big step forward for profiling code that makes heavy use of evals.

(The use of the µ micro symbol is also new, part of some helpful interface polishing contributed by Gisle Aas. The formatted units aren’t used on the statement timings yet, but will be at some point.)

Recursion

Previously the ‘inclusive time’ reported for a subroutine wasn’t useful for subroutines called recursively. The overall inclusive time included the inclusive time of nested calls, typically inflating it beyond usefulness.

Now the inclusive time counts only the outermost calls, so time spent in nested recursive calls is no longer added again. NYTProf now also tracks and reports the maximum call depth for each calling location.
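One way to picture this accounting is a per-sub depth counter that starts the clock only when the depth goes from 0 to 1. The Python sketch below is an illustrative model under that assumption, not NYTProf's implementation; the class name, the explicit timestamps, and tracking depth per sub rather than per calling location are all simplifications of mine.

```python
from collections import defaultdict

class RecursionAwareTimer:
    """Toy model: accumulate inclusive time for outermost calls only."""

    def __init__(self):
        self.depth = defaultdict(int)        # current recursion depth per sub
        self.max_depth = defaultdict(int)    # deepest recursion seen per sub
        self.inclusive = defaultdict(float)  # accumulated inclusive time per sub
        self._started = {}                   # start time of the outermost call

    def enter(self, sub, now):
        self.depth[sub] += 1
        self.max_depth[sub] = max(self.max_depth[sub], self.depth[sub])
        if self.depth[sub] == 1:             # outermost call: start the clock
            self._started[sub] = now

    def leave(self, sub, now):
        if self.depth[sub] == 1:             # outermost call is returning
            self.inclusive[sub] += now - self._started.pop(sub)
        self.depth[sub] -= 1

# Simulate a sub named "fact" recursing three levels deep, using fake timestamps.
timer = RecursionAwareTimer()
for t in (0, 1, 2):
    timer.enter("fact", t)
for t in (3, 4, 5):
    timer.leave("fact", t)

print(timer.inclusive["fact"], timer.max_depth["fact"])
```

With these timestamps the outermost call spans 5 time units and that is what gets recorded. Summing the inclusive time of every nested call instead would give 5 + 3 + 1 = 9, which is the kind of inflation described above.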

Disabling the Statement Profiler

NYTProf started life as a statement profiler. I added a subroutine profiler later and was delighted with how well that worked out. The subroutine profiler is novel in a few ways and also extremely robust and efficient. Part of the efficiency comes from not streaming data to disk while the program is running. It accumulates the counts and timings in memory.
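As a rough illustration of accumulating in memory rather than streaming, the Python sketch below keeps one small record per calling location and only produces output at the end. The key layout, function names, and sample values are assumptions for illustration and say nothing about NYTProf's actual data structures or file format.

```python
from collections import defaultdict

# Key: (caller file, caller line, called sub); value: [call count, total seconds].
# This key layout is an assumption made for illustration.
call_stats = defaultdict(lambda: [0, 0.0])

def record_call(caller_file, caller_line, sub, elapsed):
    """Update the in-memory totals; nothing is written to disk here."""
    entry = call_stats[(caller_file, caller_line, sub)]
    entry[0] += 1
    entry[1] += elapsed

def dump_stats():
    """Produce the report lines once, after the program has finished."""
    return [
        f"{sub} called {count}x from {file}:{line}, {total:.6f}s"
        for (file, line, sub), (count, total) in sorted(call_stats.items())
    ]

# Hypothetical calls: two from one location, one from another.
record_call("app.pl", 10, "main::foo", 0.002)
record_call("app.pl", 10, "main::foo", 0.003)
record_call("app.pl", 42, "main::foo", 0.001)
for report_line in dump_stats():
    print(report_line)
```

In a scheme like this, memory grows with the number of distinct calling locations rather than with the number of calls, and the per-call cost is a lookup and two additions.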

When profiling large or performance-sensitive applications, the costs of NYTProf’s statement profiler, in performance impact and disk space, can be prohibitive. So now I’ve added an option to disable the statement profiler. Setting the stmts=0 option turns NYTProf into a very lightweight subroutine profiler.

Caller Information for XSubs

NYTProf reports time spent in subroutines broken down by calling location. It’s one of its key features. The caller details are embedded as comment annotation into the report for each perl source code file.

That has worked very well for perl subs, but there has been no natural home for XS subroutines because there is no source code file available to annotate.

There’s a partial solution included in NYTProf 2.07: XS subs that are in the same package as profiled perl subs get annotated subroutine ‘stubs’ appended to the report for the corresponding perl source file.

It works quite well. In particular, the common UNIVERSAL methods get annotated.

Also, now that these subs have a place in the reports, any references to them are hyperlinks, naturally.

Sadly it doesn’t work so well for packages that don’t contain any perl subs, like DBI::st. I plan to add per-package report pages for them in a future release.

Clock Docs

I’ve greatly expanded the description of the various clocks available for profiling: POSIX real-time clocks, gettimeofday(), and Time::HiRes.

I’ve also added a caveats section that discusses issues with SMP systems, processor affinity, and virtual machines.

Feedback Wanted

How has NYTProf helped you optimize your applications? Let me know.

Perhaps as blog posts of your own, linking back here, or comments here, or CPAN ratings, or email to the mailing list or just to me. I’d love to hear from you.

Thanks!
